Running large language models locally is no longer just a hobby for machine learning engineers—it has evolved into an architectural standard for privacy-first application development. Relying on remote, cloud-based AI providers means burning cash on token fees, accepting uncontrollable network latency, and piping your proprietary code and user data through third-party servers.

By executing models directly on your own hardware, you instantly resolve those bottlenecks. As of March 2026, the open-weight ecosystem has caught up, producing foundational models that rival the cloud titans of yesteryear.

Here is our definitive engineering guide to the top specialized engines for running AI locally, alongside a breakdown of the most powerful models currently dominating the market.

Why Migrate to Local LLMs in 2026?

Before we look at the tools, it's vital to understand the engineering shift driving local adoption. You shouldn't spin up a local Llama instance just because it's trendy; you should do it because it fundamentally solves enterprise constraints.

- Zero-Trust Privacy: Your prompts, database schemas, and API keys never leave your machine, so they stay out of third-party logs and telemetry. If you are building medical, financial, or strict-compliance software, sending user data to a cloud API can be an outright compliance violation, and local inference is often the simplest way to avoid it.

- Predictable Unit Economics: Cloud APIs charge per token, and those fractions of a cent scale badly. If you build an autonomous logic-checking pipeline that evaluates millions of requests daily, the bill can sink your project. With local inference, you pay the hardware cost upfront, and your variable expenses shrink to electricity and maintenance.

- Zero Network Bottlenecks: Say goodbye to HTTP rate-limiting (e.g., 429 Too Many Requests), heavy TLS handshakes, and sudden service outages. The model starts working the instant you send the prompt to your localhost.

- Complete Parametrization Control: You aren't boxed into rigid parameters. Open weights allow you to tune sampling and decoding settings, swap out system prompt structures effortlessly, and fine-tune models on domain-specific datasets (like proprietary legal documents) without uploading them to external clouds.
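To make the unit-economics point concrete, here is a minimal break-even sketch. The function name and every number used with it are hypothetical placeholders, not real price quotes; plug in your own hardware cost, daily token volume, and provider pricing.

```python
def breakeven_days(hardware_cost_usd: float,
                   tokens_per_day: float,
                   cloud_price_per_million_usd: float,
                   power_cost_per_day_usd: float = 0.0) -> float:
    """Days until a one-time hardware purchase beats per-token cloud billing.

    All inputs are assumptions supplied by the caller. Returns infinity when
    running locally would never be cheaper (daily saving is zero or negative).
    """
    # What the same workload would cost per day on a metered cloud API.
    cloud_cost_per_day = tokens_per_day / 1_000_000 * cloud_price_per_million_usd
    # Local inference still costs electricity, so subtract it from the saving.
    daily_saving = cloud_cost_per_day - power_cost_per_day_usd
    if daily_saving <= 0:
        return float("inf")
    return hardware_cost_usd / daily_saving


# Illustrative numbers only: a $2,000 GPU, 50M tokens/day, $1 per 1M tokens.
days = breakeven_days(2000, 50_000_000, 1.0)
```

At low daily volume the function returns infinity, which is a useful reminder that local hardware only pays off once the pipeline is busy enough.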

At a Glance: Top Local LLM Frameworks & Models

Top 5 Local Inference Frameworks

| Tool | Primary Use Case | Engineering Highlight |
|---|---|---|
| Ollama | Seamless CLI & Background Daemons | Single-command deployment (ollama run) |
| LM Studio | Visual Model Management | Auto-checks hardware compatibility before download |
| Text Gen WebUI | Advanced Research & Tweaking | Supports massive bleeding-edge model formats |
| LocalAI | Dropping Cloud Dependencies | Perfectly mimics OpenAI's API endpoints |
| GPT4All | Casual CPU Execution | Heavily optimized to run on standard CPUs (no GPU needed) |

Top Heavyweight Models (2025 Releases)

| Foundation Model | Developer | Best Use Case | Min. VRAM (Q4) | License |
|---|---|---|---|---|
| GPT-OSS (20B) | OpenAI | High-end Python/JS coding | ~16GB | Apache 2.0 |
| DeepSeek V3.2-Exp | DeepSeek | Mathematical "Thinking" logic | ~32GB+ | Custom |
| Qwen3-Omni | Alibaba | True Audio/Video multimodal | ~48GB+ | Tongyi Qianwen |
| Gemma 3 (9B) | Google | Low-latency logical tasks | ~8GB | Gemma |
| Llama 4 (70B) | Meta | Enterprise RAG backend | ~40GB+ | Llama 4 |

Deep Dive: The Top 5 Local Inference Engines

Which framework you choose dictates the friction curve of deploying models. Here are the clear winners in 2026.

1. Ollama (The Absolute Standard)


Getting Started with Ollama:

- Install Ollama: You can download the desktop client from the official site, or use the curl install script on Linux.

curl -fsSL https://ollama.com/install.sh | sh

- Execute a Model: Need a quick coding assistant? Pull and run a model (like Llama) immediately. Ollama handles the downloading and GPU mapping for you.

ollama run llama4

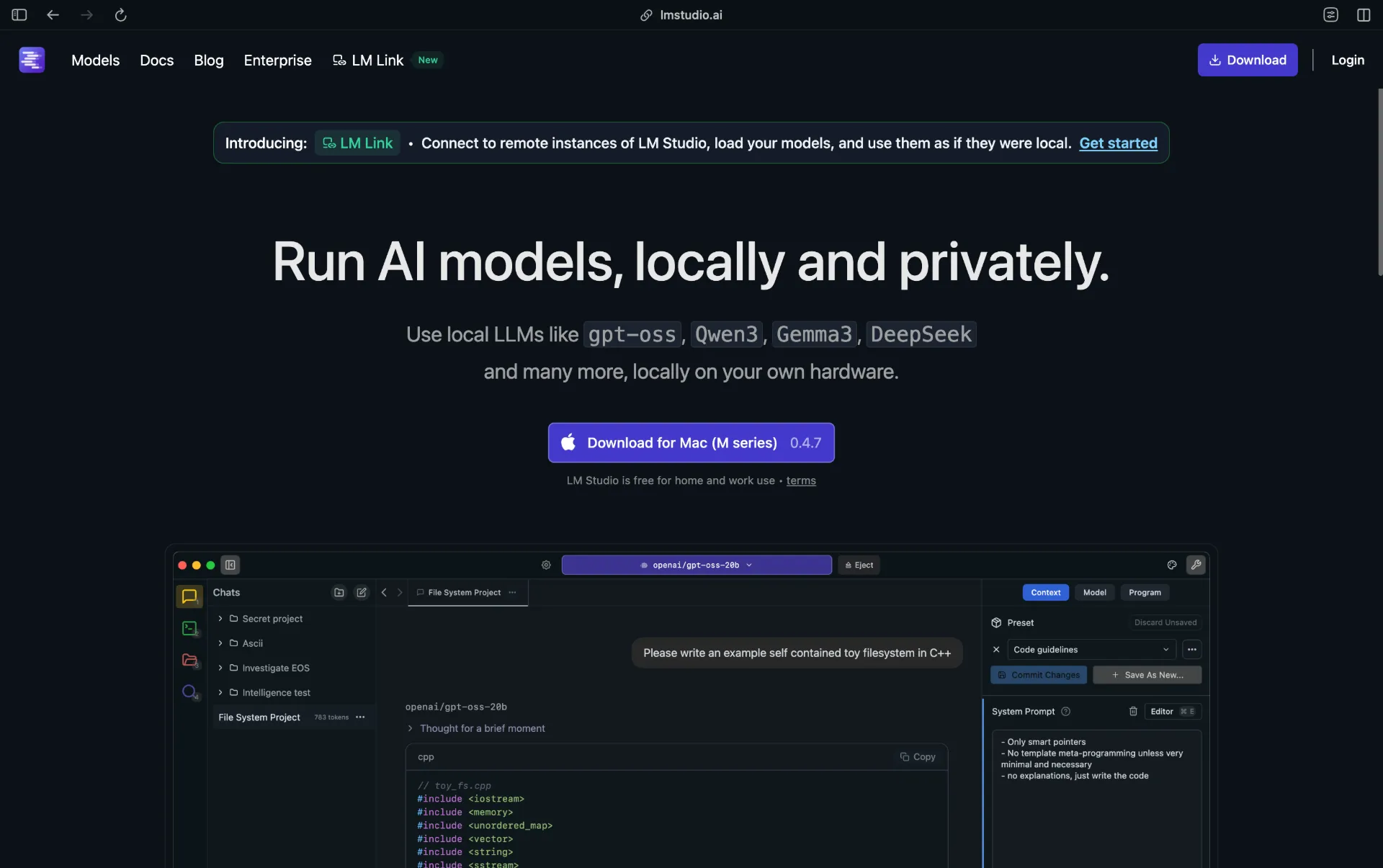
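Once the model is running, Ollama also exposes a local HTTP API, which is how application code usually talks to it. The sketch below is a minimal, stdlib-only example that assumes Ollama is listening on its default port (11434) and that the `llama4` tag from the command above has been pulled; the helper names are illustrative, not part of any official client.

```python
import json
import urllib.request

# Assumption: the Ollama daemon is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama4") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {
        "model": model,     # must match a tag you have already pulled
        "prompt": prompt,
        "stream": False,    # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama4", timeout: float = 120.0) -> str:
    """Send the prompt to the local daemon and return the generated text."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

Calling `generate("Write a haiku about VRAM")` should return the completion as a plain string, provided the daemon is up and the model is available.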

2. LM Studio (The Best GUI)


Getting Started with LM Studio:

- Download: Navigate to lmstudio.ai and grab the executable for your OS.

- Search Inside the App: Use the built-in search bar to type a model name (e.g., "Gemma 3").

- Execute: Click download on the compatible quantization and click "Start Server" on the left-side tab to instantly host an OpenAI-compatible API locally.
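With the server started, any OpenAI-style client can talk to it. Here is a minimal stdlib sketch of a chat request; it assumes the server is on LM Studio's usual default port (1234), and the model name `local-model` is a hypothetical placeholder for whatever identifier the app shows for your loaded model.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running on its default port.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_chat_request(user_message: str,
                       model: str = "local-model",   # hypothetical placeholder
                       temperature: float = 0.2) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": user_message},
        ],
        "temperature": temperature,
    }
    return urllib.request.Request(
        LM_STUDIO_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    return response_body["choices"][0]["message"]["content"]


def chat(user_message: str, timeout: float = 120.0) -> str:
    """Send one message to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(user_message), timeout=timeout) as resp:
        return extract_reply(json.loads(resp.read()))
```

Keeping request building and response parsing in separate functions makes both easy to unit test without a running server.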

3. Text Generation WebUI (For the Power User)

This is the Linux power-user approach to LLMs. While Ollama deliberately hides complex parameters to ensure stability, Text Generation WebUI (Oobabooga) exposes every single knob and dial of the inference stack. It is almost always the very first platform to support newly formatted model types (AWQ, EXL2).

Getting Started with Text Gen WebUI:

- Clone the Repository:

Bash

git clone https://github.com/oobabooga/text-generation-webui
cd text-generation-webui

- Launch the Automated Script: Run the bash/batch script for your operating system. It will automatically build the conda environment and download the necessary PyTorch dependencies.

Bash

./start_linux.sh # (use start_windows.bat or start_macos.sh depending on your OS)

4. LocalAI (The Deception Engine)


Getting Started with LocalAI:

- Boot via Docker: The easiest deployment method for local staging is spinning it up inside a Docker container attached to port 8080.

Bash

docker run -p 8080:8080 -v $PWD/models:/models -ti --rm quay.io/go-skynet/local-ai:latest --models-path /models

- Hit the API: Take your existing Python/JS software, change the base OpenAI URL to http://localhost:8080, and your code runs natively via your local GPU.
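The base-URL swap described above can be sketched as a small configuration helper. This is an illustration under stated assumptions: `LLM_BASE_URL` is a hypothetical environment variable name, and the `/v1` suffix reflects what OpenAI-style clients typically expect in a base URL, so check it against your own client.

```python
import os
from typing import Mapping, Optional

# Assumption: LocalAI is published on port 8080, as in the docker command above.
LOCAL_BASE_URL = "http://localhost:8080/v1"
CLOUD_BASE_URL = "https://api.openai.com/v1"


def resolve_base_url(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the API base URL, defaulting to the local server.

    LLM_BASE_URL is a hypothetical variable name chosen for this sketch.
    """
    env = os.environ if env is None else env
    return env.get("LLM_BASE_URL", LOCAL_BASE_URL)


def endpoint(path: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Join the base URL and an API path without doubling slashes."""
    return resolve_base_url(env).rstrip("/") + "/" + path.lstrip("/")


# Local by default; point LLM_BASE_URL at CLOUD_BASE_URL to switch back.
local_chat_url = endpoint("chat/completions", env={})
```

Because the rest of the application only ever calls `endpoint(...)`, moving between cloud and local becomes a deployment setting rather than a code change.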

5. GPT4All (The Desktop Casual)


Getting Started with GPT4All:

- Download the Client: Grab the standalone app directly from nomic.ai/gpt4all.

- Install (No Terminal Required): Run the installer.

- Select a Model: Open the GUI and select a smaller model recommended for CPU inference, and you can begin prompting immediately, just as you would in a ChatGPT window.

"Bonus - Jan.ai: We also highly recommend Jan, which serves as a stunning, 100% offline desktop alternative to ChatGPT. It operates with a strict philosophy against telemetry and data harvesting.

The Heavyweights: Breakout Models

The timeline of AI advancement accelerated massively last year. Here are the breakthrough models that launched in 2025 and are actively dominating the local landscape right now.

1. GPT-OSS (20B & 120B)

OpenAI GPT-OSS

2. DeepSeek V3.2-Exp

DeepSeek V3.2

3. Qwen3-Next & Qwen3-Omni

Qwen3

4. Gemma 3 Family

Gemma 3

5. Llama 4

Llama 4

Production Hardware Realities: Math You Cannot Ignore

It doesn't matter how clever your Python wrapping logic is; VRAM (Video RAM) is the ultimate strict physical chokepoint for modern AI. A good rule of thumb for standard Q4 Quantization is ~0.6GB to 0.7GB of VRAM per 1 Billion Parameters.

- Small Edge Models (3B - 9B): You can comfortably survive on consumer gaming hardware. A bare minimum of 16GB unified memory (Apple Silicon M-Series) or an NVIDIA GPU with 8GB-12GB of VRAM (like an RTX 3060) is completely sufficient.

- Mid-Tier Enterprise (20B - 35B): You need serious dedicated horsepower. Prepare to wire up 32GB+ of system memory, or preferably, a flagship consumer rig like the RTX 4090 with 24GB VRAM.

- Heavyweight Clusters (70B+ Models): Say goodbye to a single PC setup. Running a 70B model or the massive GPT-OSS 120B efficiently requires splitting layer inference across dual RTX 4090s, Mac Studio Ultras, or dropping $10,000+ on explicit server configurations (A100 grids).
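The rule of thumb above is easy to turn into a quick sanity check before you download anything. The sketch below uses only the 0.6 to 0.7 GB per billion parameters figure quoted in this section; the function names are illustrative, and the estimate covers model weights only, so treat it as a floor, since context length and the KV cache add overhead on top.

```python
from typing import Tuple


def estimate_q4_vram_gb(params_billions: float,
                        gb_per_billion: Tuple[float, float] = (0.6, 0.7)) -> Tuple[float, float]:
    """Return a (low, high) VRAM estimate in GB for a Q4-quantized model.

    Uses the article's rule of thumb; weights only, no context overhead.
    """
    low, high = gb_per_billion
    return params_billions * low, params_billions * high


def fits(params_billions: float, vram_gb: float) -> bool:
    """True if the pessimistic (high) estimate fits in the given VRAM."""
    _, high = estimate_q4_vram_gb(params_billions)
    return high <= vram_gb


# Compare the model sizes from this guide against a 24 GB card.
for size in (9, 20, 70):
    low, high = estimate_q4_vram_gb(size)
    verdict = "fits" if fits(size, 24) else "does not fit"
    print(f"{size}B: ~{low:.1f}-{high:.1f} GB, {verdict} in 24 GB")
```

Using the high end of the range for the fit check is a deliberately conservative choice: it is cheaper to discover a model is too big on paper than after a 40 GB download.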

Conclusion

The golden age of open-weight computing has officially arrived. Between the seamless containerized orchestration provided by open-source tools like Ollama and LocalAI, and the raw logical horsepower of models like DeepSeek V3.2-Exp and GPT-OSS, cloud dependencies have finally been rendered optional.

Review the VRAM constraints of your deployment hardware, pick a framework, pull a lightweight model like Gemma 3 (or Llama 4 if your hardware can carry it) off Hugging Face, and build your next AI feature with uncompromised privacy and zero token fees.